Agents Reason.

Sertainly Decides.

AI agents are probabilistic. Business decisions must be deterministic. Sertainly gives your agents versioned decision APIs they call via REST or MCP — sub-millisecond verdicts, full trace, zero inference at runtime.

No SDK required · Any agent framework · Curl-compatible

Why agents need a deterministic decision layer

LLMs reason probabilistically. When your agent needs to approve, deny, route, or escalate — probabilities aren't good enough. Sertainly is the deterministic layer between your agent and the real world.

Determinism

Same case + same rules version = identical verdict, every single time. No model variability. No prompt drift. No “it said yes yesterday and no today.” Version-pin a decision package and your agent's decision logic is locked until you explicitly promote a new version.
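A minimal sketch of per-environment pinning, assuming package references use the `name@version` form shown in this section. The `PINS` table and `resolve_pin` helper are hypothetical names for illustration, not part of Sertainly.

```python
# Hypothetical pin table: one package reference per environment.
# The "name@version" format follows the example in this section.
PINS = {
    "prod": "expense_policy@2.1.0",
    "staging": "expense_policy@2.2.0-rc1",
}


def resolve_pin(environment: str) -> tuple[str, str]:
    """Split the pinned reference for an environment into (package_id, version)."""
    ref = PINS[environment]
    package_id, _, version = ref.partition("@")
    if not package_id or not version:
        raise ValueError(f"malformed pin: {ref!r}")
    return package_id, version


print(resolve_pin("prod"))     # ('expense_policy', '2.1.0')
print(resolve_pin("staging"))  # ('expense_policy', '2.2.0-rc1')
```

Keeping the pin in configuration rather than in agent code means promoting a new rules version is a one-line change per environment.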

Pin artifacts per environment: expense_policy@2.1.0 in prod, @2.2.0-rc1 in staging.

Observability

Every verdict comes with a trace_id, fired statement list, derived values, and citations back to the source document. Debug “why did the agent do that?” with evidence — not by replaying prompts and hoping the model behaves the same way twice.

Speed

Sertainly evaluates compiled rules IR — no embedding search, no retrieval, no LLM inference at decision time. Evaluations run in sub-millisecond time, making Sertainly suitable for high-volume, real-time agentic workflows where RAG latency would be a showstopper.

RAG: 500ms–3s per decision. Sertainly: <1ms. At 10,000 decisions/day, that's hours of agent wait time eliminated.

Cost

Sertainly uses AI once — at compile time — to extract rules and generate the artifact. After that, evaluations are pure computation: no tokens, no inference, no per-decision AI bill. The cost model is flat and predictable.

AI is used once at compile time, not on every runtime evaluation. Cost per decision approaches zero at scale.

Prompt-based guardrails are probabilistic. Policy-based guardrails are deterministic.

RAG is powerful for research and guidance. But when your agent needs to make an authoritative decision — approve or deny, route or escalate — you need rules enforced outside the prompt, not inside it.

RAG approach

- Non-deterministic: same question, different answers across runs, model versions, or document ranking changes

- Expensive per decision: embedding search + retrieval + LLM inference on every call — cost scales linearly with volume

- Slow: retrieval latency + inference latency on every evaluation — 500ms to 3s per decision

- Audit black box: “why” requires reconstructing model behavior from logs — not reproducible

- Silent rules drift: document or embedding changes silently alter decisions without an explicit version bump

- Fills gaps with confidence: missing data is guessed, not surfaced — your agent acts on invented facts

Sertainly approach

- Deterministic: same case + same rules version = identical verdict, always — no model variability

- Near-zero cost per decision: compiled IR, no embedding search, no inference at evaluation time

- Sub-millisecond: single REST call, no retrieval pipeline, no LLM invocation at decision time

- First-class trace: every verdict links to fired statements, source citations, and a replay token

- Explicit versioning: rules changes create new pinnable versions; rollback is instant

- Structured missing data: needs_info surfaces exactly which fields are absent, with no hallucinated gap-filling

The difference isn't just conceptual — it affects latency, cost, determinism, and auditability at runtime.

| Dimension | RAG / Runtime AI | Sertainly |
|---|---|---|
| Determinism | Low–Medium | High |
| Per-decision cost | Scales with tokens + retrieval | Near-zero (compiled IR) |
| Latency | ~500ms–3s (retrieval + inference) | Sub-millisecond evaluation |
| Missing data | Fills gaps silently and confidently | needs_info + required_fields[] |
| Auditability | Reconstruct from logs | First-class trace + source citations |
| Rules versioning | Implicit (doc change = silent drift) | Semver, pinnable per environment |
| AI at runtime | Every call: retrieval + inference | None: AI used only at compile time |
| Best for | Advice, exploration, guidance | Authoritative enforcement |
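To put the latency row in concrete terms, the arithmetic below converts the quoted per-decision latencies into daily wait time at 10,000 decisions per day. It assumes decisions run sequentially and treats 1 ms as an upper bound for the sub-millisecond figure.

```python
DECISIONS_PER_DAY = 10_000


def daily_wait_hours(latency_seconds: float, decisions: int = DECISIONS_PER_DAY) -> float:
    """Total sequential wait per day, in hours, at a given per-decision latency."""
    return latency_seconds * decisions / 3600


rag_low = daily_wait_hours(0.5)     # 500 ms per decision
rag_high = daily_wait_hours(3.0)    # 3 s per decision
compiled = daily_wait_hours(0.001)  # 1 ms upper bound

print(f"RAG: {rag_low:.1f} to {rag_high:.1f} hours/day")            # RAG: 1.4 to 8.3 hours/day
print(f"Compiled rules at 1 ms: {compiled * 3600:.0f} seconds/day")  # 10 seconds/day
```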

The winning pattern: use an LLM for natural language understanding and planning, then call Sertainly for the authoritative verdict. LLMs reason; Sertainly decides.

needs_info One more thing RAG can't do: tell you what it's missing

Sertainly makes missing information a first-class outcome. When required fields are absent, the engine returns needs_info with an explicit required_fields[] array. Your agent loops back, asks the user exactly the right questions, and re-evaluates. No guessing. No hallucinated field values. Guaranteed termination because the schema is finite.

Rules (YAML) — REQUIRE statement

Agent loop (pseudo-code)

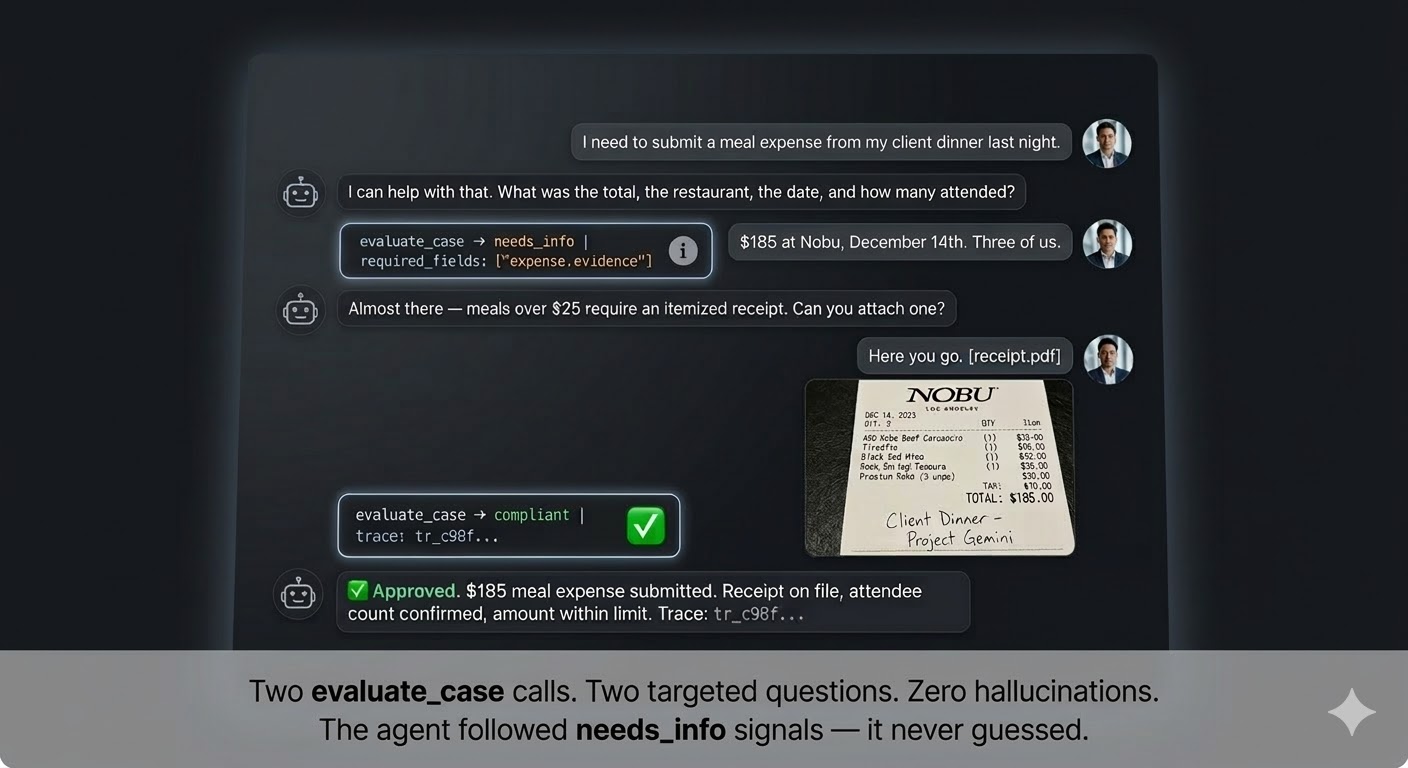
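The agent loop can be sketched in Python as follows. This is an illustration under stated assumptions: `evaluate` and `ask_user` are injected callables, `fake_evaluate` is a stand-in for the engine, and the response keys (`verdict`, `required_fields`, `trace_id`) follow the field names used in this document.

```python
from typing import Callable


def complete_case(
    case: dict,
    evaluate: Callable[[dict], dict],
    ask_user: Callable[[str], object],
    max_rounds: int = 10,
) -> dict:
    """Re-evaluate until the verdict is no longer needs_info."""
    case = dict(case)  # work on a copy so the caller's dict is untouched
    for _ in range(max_rounds):
        result = evaluate(case)
        if result["verdict"] != "needs_info":
            return result
        # Ask only for the fields the engine reported as missing.
        for field in result["required_fields"]:
            case[field] = ask_user(field)
    raise RuntimeError("case still incomplete after max_rounds")


def fake_evaluate(case: dict) -> dict:
    """Stand-in evaluator for demonstration only; not the real engine."""
    missing = [f for f in ("amount", "category") if f not in case]
    if missing:
        return {"verdict": "needs_info", "required_fields": missing, "trace_id": "t-demo"}
    return {"verdict": "compliant", "reason_codes": [], "trace_id": "t-demo"}


answers = {"amount": 120, "category": "travel"}
final = complete_case({}, fake_evaluate, answers.__getitem__)
print(final["verdict"])  # compliant
```

The `max_rounds` cap is a defensive addition; with a finite schema the loop should finish well before it is reached.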

See it live: a Sertainly-powered expense agent

A real agent conversation powered by Sertainly. The agent uses needs_info to collect only what's required, then returns a deterministic verdict. No hallucinations. No over-asking.

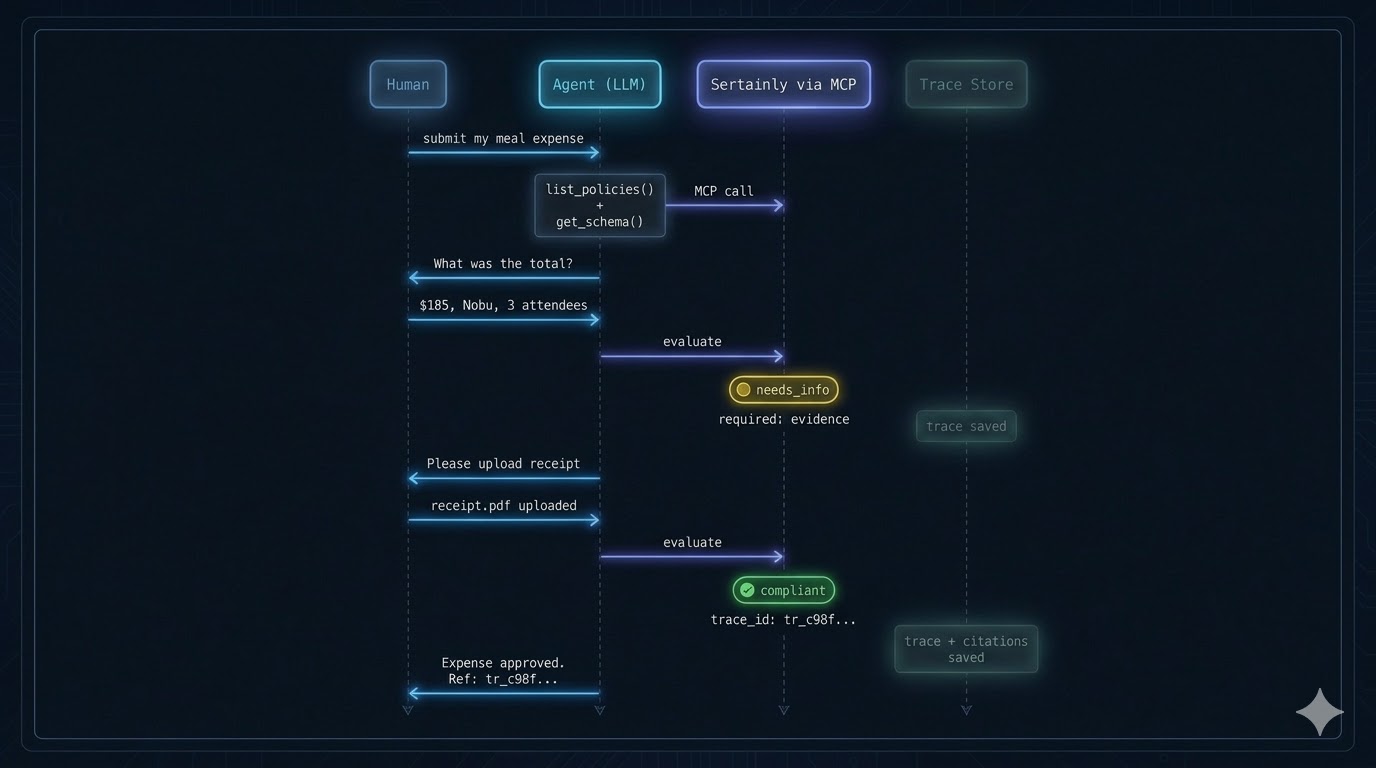

Your agent calls decisions as MCP tools

Sertainly exposes four MCP tools: list_policies, get_schema, evaluate_case, and get_trace. Your agent discovers and calls them natively — no custom integration code.

list_policies

Discover available rules, versions, and effective dates. Agent uses this to select the correct rules to evaluate against.

Input: none · Output: [{package_id, version, effective}]

get_schema

Returns JSON Schema for the case — what fields are required, their types, and allowed enums. Drives the agent's question generation.

Input: package_id, version · Output: JSON Schema

evaluate_case

Submit the case. Returns verdict, reason_codes, required_fields (when needs_info), and a trace_id. The core decision call.

Input: case, package_id · Output: verdict, reason_codes, trace_id

get_trace

Retrieve the full audit trail for any evaluation. Returns fired statements, derived values, source citations, and the execution profile used.

Input: trace_id · Output: entries[], statements_evaluated, citations

Deterministic agent-to-agent handoffs with ROUTE

Multi-agent systems need more than routing logic written in LLM prompts — they need routing logic that is versioned, auditable, and identical across every run. The ROUTE statement produces a needs_review verdict with a structured to destination. Your orchestrator reads that destination and hands the case to the right specialist agent — deterministically, every time.

Sertainly Rules ROUTE statements (YAML)

- Higher-priority ROUTE can override lower-priority with override: true

- sla_hours travels with the verdict for downstream SLA tracking

- Multiple ROUTE statements compose; the highest-priority applicable one wins

Orchestrator Reading the route destination

- No prompt-based routing — the rules decide, not the LLM

- The receiving agent gets the full trace: what triggered the route and why

- Routing logic is versioned with the rules — update routing rules without touching orchestrator code
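A hedged sketch of an orchestrator reading the route destination. It assumes the needs_review response exposes the destination under a `route_to` key, as the verdict reference in this document names it; the handler table, fallback, and sample payload are hypothetical.

```python
from typing import Callable

Handler = Callable[[dict], str]


def make_orchestrator(handlers: dict[str, Handler], fallback: Handler) -> Handler:
    """Build a dispatcher that hands needs_review cases to the routed handler."""

    def dispatch(result: dict) -> str:
        if result.get("verdict") != "needs_review":
            raise ValueError("only needs_review verdicts carry a route destination")
        # The rules chose the destination; the orchestrator only looks it up.
        handler = handlers.get(result.get("route_to"), fallback)
        return handler(result)

    return dispatch


dispatch = make_orchestrator(
    {"APPROVAL_QUEUE": lambda r: f"queued for approval (trace {r['trace_id']})"},
    fallback=lambda r: f"sent to human triage (trace {r['trace_id']})",
)

print(dispatch({"verdict": "needs_review", "route_to": "APPROVAL_QUEUE", "trace_id": "t-1"}))
# queued for approval (trace t-1)
```

Because the lookup table holds no routing conditions, changing who receives a case is a rules change, not an orchestrator change.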

Why not just prompt-route?

When routing is in an LLM prompt, the routing logic changes every time the prompt changes — silently, without a version bump. With ROUTE statements in Sertainly, routing logic is part of the decision package. It's versioned, testable, and replayable. Promoting a new rules version is the only way routing logic changes.

Use for: compliance triage, approval chains, specialist escalation, human-in-the-loop gates.

Trace continuity across agents

Every needs_review verdict carries a trace_id that the receiving agent can pass to get_trace. The specialist agent sees exactly which ROUTE rule fired, what data triggered it, and the full case at the moment of handoff — no context reconstruction required.

The five verdicts — and what to do with each

Every evaluate_case call returns exactly one of five verdicts. Each is a first-class signal your agent can act on deterministically — not a probability score to interpret, but a structured instruction to follow.
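One way an agent might branch on the five verdicts described in this section is sketched below. The verdict strings come from this document; the `Verdict` enum, the `next_action` helper, and the returned action strings are illustrative assumptions.

```python
from enum import Enum


class Verdict(str, Enum):
    COMPLIANT = "compliant"
    NON_COMPLIANT = "non_compliant"
    NEEDS_INFO = "needs_info"
    NEEDS_REVIEW = "needs_review"
    NO_CHANGE = "no_change"


def next_action(result: dict) -> str:
    """Map an evaluation result to the agent's next step."""
    verdict = Verdict(result["verdict"])  # raises ValueError on an unknown verdict
    if verdict is Verdict.COMPLIANT:
        return "proceed"
    if verdict is Verdict.NON_COMPLIANT:
        return "block: " + ", ".join(result.get("reason_codes", []))
    if verdict is Verdict.NEEDS_INFO:
        return "ask for: " + ", ".join(result.get("required_fields", []))
    if verdict is Verdict.NEEDS_REVIEW:
        return "hand off"
    return "read tags"  # no_change: annotations only


print(next_action({"verdict": "needs_info", "required_fields": ["amount"]}))
# ask for: amount
```

Parsing the verdict through an enum means an unexpected value fails loudly instead of falling through to a default branch.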

compliant The rule passed — proceed

The case satisfied the evaluated rule. ALLOW matched, REQUIRE was satisfied, or LIMIT was not exceeded. The agent can take the proposed action.

Agent action: approve, execute, or confirm. Log the trace_id for audit.

non_compliant The rule failed — block

FORBID matched, REQUIRE failed, or LIMIT was exceeded. The case must not proceed. Reason codes tell the agent (and user) exactly what was violated.

Agent action: block the action, explain the violation to the user using reason_codes.

needs_info Missing data — ask

Required fields or evidence are absent. The engine cannot evaluate the rule without them. The agent must collect the missing data and re-submit.

Agent action: ask the user for exactly the fields in required_fields[], then re-evaluate.

needs_review Route it — don't decide

The rules explicitly route this case for human or specialist-agent review. Produced by ROUTE statements.

Agent action: hand off to the destination in route_to — human queue or specialist agent.

no_change Annotated only — no effect

A TAG statement fired and added classification labels, but did not produce a compliance outcome. Use for audit tagging and downstream routing metadata.

Agent action: read tags[] for downstream context. The overall case verdict is set by other statements.

Execution Profiles Control which verdicts the engine can produce

Pass a profile in the evaluate request to restrict which statement types are evaluated and how missing data is handled. Three reference profiles ship out of the box.

| Profile | Statement types evaluated | Missing data behaviour | Best for |
|---|---|---|---|
| FULL_ENFORCEMENT (default) | DEFINE, ALLOW, FORBID, LIMIT, REQUIRE, ROUTE, TAG | enforce: returns needs_info / needs_review | Production agents making authoritative decisions |
| CONSTRAINT_CHECK | DEFINE, ALLOW, FORBID, LIMIT, TAG | ask: prefers needs_info | Pre-flight checks before submitting a full case |
| ADVISORY_PERMISSIBILITY | DEFINE, ALLOW, FORBID, TAG | ignore: skips unevaluable statements | Guidance mode: “would this likely be allowed?” |
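The profile table can be restated as data to see which statement types each profile leaves out relative to the default. The sets below are copied from the table; the `PROFILES` mapping and `skipped_statement_types` helper are illustrative names, not an engine API.

```python
# Statement types evaluated per profile, as listed in the table above.
PROFILES = {
    "FULL_ENFORCEMENT": {"DEFINE", "ALLOW", "FORBID", "LIMIT", "REQUIRE", "ROUTE", "TAG"},
    "CONSTRAINT_CHECK": {"DEFINE", "ALLOW", "FORBID", "LIMIT", "TAG"},
    "ADVISORY_PERMISSIBILITY": {"DEFINE", "ALLOW", "FORBID", "TAG"},
}


def skipped_statement_types(profile: str) -> set[str]:
    """Statement types the default profile evaluates but this profile does not."""
    return PROFILES["FULL_ENFORCEMENT"] - PROFILES[profile]


print(sorted(skipped_statement_types("CONSTRAINT_CHECK")))
# ['REQUIRE', 'ROUTE']
print(sorted(skipped_statement_types("ADVISORY_PERMISSIBILITY")))
# ['LIMIT', 'REQUIRE', 'ROUTE']
```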

Quickstart: evaluate your first case in 5 minutes

No SDK required. Sertainly is a REST API — if you can curl, you can integrate. Here's the complete flow from zero to verdict.

Don't want to write rules by hand? Upload a source document and let AI extract, compile, and test the decision package for you. You review and approve — then everything below applies.

Get your API key

Create a free account. Copy your API key from Settings → API Keys. No credit card required.

Discover available rules

Call list_policies to see what's available. Returns policy IDs, versions, and effective dates.
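As a sketch of what an agent might do with that listing, the helper below picks the version of a package in effect on a given date. It assumes each entry carries `package_id`, `version`, and `effective`, and that `effective` is an ISO 8601 date string; the sample entries and dates are made up.

```python
from datetime import date


def select_policy(policies: list[dict], package_id: str, on: date) -> dict:
    """Pick the entry for package_id with the latest effective date not after `on`."""
    candidates = [
        p for p in policies
        if p["package_id"] == package_id and date.fromisoformat(p["effective"]) <= on
    ]
    if not candidates:
        raise LookupError(f"no version of {package_id} is effective on {on}")
    return max(candidates, key=lambda p: date.fromisoformat(p["effective"]))


# Made-up listing for illustration.
policies = [
    {"package_id": "expense_policy", "version": "2.0.0", "effective": "2024-01-01"},
    {"package_id": "expense_policy", "version": "2.1.0", "effective": "2024-06-01"},
    {"package_id": "expense_policy", "version": "2.2.0", "effective": "2025-01-01"},
]

chosen = select_policy(policies, "expense_policy", date(2024, 9, 1))
print(chosen["version"])  # 2.1.0
```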

Fetch the schema (know what to collect)

Before sending a case, get the schema to understand what fields are required. This is what drives agent question generation.
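A minimal sketch of schema-driven question generation, assuming the returned schema uses the standard JSON Schema keywords `required`, `properties`, `type`, and `enum`. The sample schema and the question wording are hypothetical.

```python
def missing_fields(schema: dict, case: dict) -> list[str]:
    """Names listed under the schema's `required` keyword that the case lacks."""
    return [name for name in schema.get("required", []) if name not in case]


def question_for(schema: dict, name: str) -> str:
    """Phrase a question for one field, listing allowed values when an enum exists."""
    spec = schema.get("properties", {}).get(name, {})
    if "enum" in spec:
        options = ", ".join(str(v) for v in spec["enum"])
        return f"Please provide {name} (one of: {options})."
    return f"Please provide {name} ({spec.get('type', 'any type')})."


# Made-up schema for illustration.
schema = {
    "type": "object",
    "required": ["amount", "category"],
    "properties": {
        "amount": {"type": "number"},
        "category": {"type": "string", "enum": ["travel", "meals"]},
    },
}

for field in missing_fields(schema, {"amount": 42}):
    print(question_for(schema, field))
# Please provide category (one of: travel, meals).
```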

Evaluate a case

Send the case. You'll get a verdict, reason codes, and a trace ID. If the verdict is needs_info, the required_fields array tells you exactly what's missing.
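The request for this step might be assembled as below. Everything about the transport is an assumption made for illustration: the base URL is a placeholder, the `/evaluate` path and the Bearer authorization scheme are guesses, and only the body keys (`package_id`, `case`, `profile`) come from this document. The request is built but never sent.

```python
import json
import urllib.request

# Placeholder host; substitute the real API base URL from your account.
BASE_URL = "https://api.example.invalid"


def build_evaluate_request(api_key, package_id, case, profile=None):
    """Build (but do not send) a POST request carrying the case to evaluate."""
    body = {"package_id": package_id, "case": case}
    if profile is not None:
        body["profile"] = profile  # e.g. "CONSTRAINT_CHECK" for a dry run
    return urllib.request.Request(
        f"{BASE_URL}/evaluate",  # hypothetical path
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # assumed auth scheme
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_evaluate_request(
    "YOUR_API_KEY", "expense_policy", {"amount": 42}, profile="CONSTRAINT_CHECK"
)
print(req.get_method(), json.loads(req.data))
```

Passing the request to `urllib.request.urlopen` would send it; that call is left out so the sketch runs without network access.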

Handle needs_info in your agent loop

When fields are missing, Sertainly returns needs_info with a required_fields array. Loop back, collect the missing data, and re-evaluate.

- Loop terminates because required_fields is finite and schema-bounded

- Each partial call returns a trace_id, so even incomplete attempts have a full audit trail

- Add "profile": "CONSTRAINT_CHECK" to the body for a “what would be missing?” dry-run mode

Fetch the trace (optional, for audit or debug)

Every evaluation produces a trace. Use the trace_id to retrieve fired statements, derived values, source citations, and the effective execution profile.

Add it to your MCP server (optional)

Expose list_policies, get_schema, evaluate_case, and get_trace as tools in your MCP server. Your LLM agent can discover and call them natively — no custom orchestration code needed.

- Works with Claude, GPT-4o, Gemini — any MCP-compatible agent

- Agent reads the tool description to understand when and how to call it

- Chain multiple rule sets: eligibility → pricing → approval in one agent turn
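For orientation, the four tools could be described to an MCP client with descriptors along these lines. The name / description / inputSchema shape is the one MCP tool listings use; the argument names come from the tool summaries in this document, while the schema details and the `required_args` helper are assumptions.

```python
# Illustrative tool descriptors; schema details are assumptions.
TOOLS = [
    {
        "name": "list_policies",
        "description": "Discover available rules, versions, and effective dates.",
        "inputSchema": {"type": "object", "properties": {}},
    },
    {
        "name": "get_schema",
        "description": "Return the JSON Schema for a case: required fields, types, enums.",
        "inputSchema": {
            "type": "object",
            "properties": {"package_id": {"type": "string"}, "version": {"type": "string"}},
            "required": ["package_id", "version"],
        },
    },
    {
        "name": "evaluate_case",
        "description": "Submit a case and receive a verdict, reason codes, and a trace_id.",
        "inputSchema": {
            "type": "object",
            "properties": {"case": {"type": "object"}, "package_id": {"type": "string"}},
            "required": ["case", "package_id"],
        },
    },
    {
        "name": "get_trace",
        "description": "Retrieve the full audit trail for an evaluation.",
        "inputSchema": {
            "type": "object",
            "properties": {"trace_id": {"type": "string"}},
            "required": ["trace_id"],
        },
    },
]


def required_args(tool_name: str) -> list[str]:
    """Arguments a caller must supply for the named tool."""
    tool = next(t for t in TOOLS if t["name"] == tool_name)
    return tool["inputSchema"].get("required", [])


print(required_args("evaluate_case"))  # ['case', 'package_id']
```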

Agent workflow patterns

Patterns builders are shipping today. Each is enabled by deterministic verdicts — not possible with prompt-based guardrails alone.

Iterative Case Completion

Agent loops on needs_info until all required fields are present, then gets a final verdict. Guaranteed termination. Zero hallucinated field values. Works over multi-turn chat, voice, or async forms.

required_fields[]

Deterministic Action Gates

Agent proposes an action (refund, approval, write operation). Sertainly evaluates before anything is executed. If non-compliant or needs-review, the action is blocked and reason codes are returned — not a probabilistic “probably no.”

Key verdict: non_compliant · needs_review

Risk-Based Routing

Low-risk cases (compliant) proceed autonomously. Medium-risk (needs_review) get routed to a human queue. High-risk (non_compliant) are blocked. One set of rules, three lanes.

to: APPROVAL_QUEUE

Rules-as-Contract Testing

Write test cases as JSON. Replay them against new rules versions before promoting to production. Diff reason codes across versions to catch behavior changes — not in prod, in CI.
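A sketch of the version diff in CI, under stated assumptions: the two evaluators are injected callables, `make_evaluator` is a stand-in with a single made-up limit rule, and `OVER_LIMIT` is an invented reason code.

```python
def diff_versions(cases: dict, evaluate_old, evaluate_new) -> list[tuple]:
    """Return (case_name, before, after) for every case whose outcome changed."""
    changes = []
    for name, case in cases.items():
        old, new = evaluate_old(case), evaluate_new(case)
        before = (old["verdict"], sorted(old.get("reason_codes", [])))
        after = (new["verdict"], sorted(new.get("reason_codes", [])))
        if before != after:
            changes.append((name, before, after))
    return changes


def make_evaluator(limit: int):
    """Stand-in for one pinned rules version; not the real engine."""
    def evaluate(case: dict) -> dict:
        if case["amount"] > limit:
            return {"verdict": "non_compliant", "reason_codes": ["OVER_LIMIT"]}
        return {"verdict": "compliant", "reason_codes": []}
    return evaluate


cases = {"small": {"amount": 50}, "large": {"amount": 150}}
changes = diff_versions(cases, make_evaluator(100), make_evaluator(200))
print(changes)
# [('large', ('non_compliant', ['OVER_LIMIT']), ('compliant', []))]
```

A CI job could fail the build whenever `changes` is non-empty and the differences have not been reviewed.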

Key tool: get_trace · replay token on every verdict

Plans

Decisions are billed per evaluation. AI features use separate credits. Start free, scale when you need to.

Free

Build and test on shared infrastructure. No credit card required.

10,000 decisions / month

- 1 decision package

- Shared runtime

- CLI + REST + MCP

- 7-day trace retention

- 200 AI credits / month

- Community support

Professional

For teams shipping agentic workloads in production.

250,000 decisions / month

- Unlimited packages

- Dev / Staging / Prod environments

- Team access + CI publish tokens

- 30-day trace retention

- Replay + export

- 500 AI credits / month

- Email support

Enterprise

Governance, certified builds, and private runtime for mission-critical agents.

Custom decision volume

- Dedicated / private runtime

- Governance workflows + certified builds

- Signed artifacts + audit export

- SSO / RBAC

- Custom retention + VPC / on-prem

- Included AI build capacity

- SLA + priority support

Agents reason. Sertainly decides.

Give your agents deterministic guardrails they call as tools. No prompt engineering. No inference at runtime.